-

New Zealand officials reject statue remembering Japan's sex slaves

New Zealand officials reject statue remembering Japan's sex slaves

-

Japan cleaner goes viral with spa-like service for plushies

-

What we learned from cycling's Spring Classics

What we learned from cycling's Spring Classics

-

Villa, Forest revive European glory days in semi-final showdown

-

Remarkable, ramshackle Rayo chasing Conference League dream amid chaos

Remarkable, ramshackle Rayo chasing Conference League dream amid chaos

-

Unbeaten records on the line for Inoue-Nakatani superfight in Tokyo

-

Cheaper, cleaner electric trucks overhaul China's logistics

Cheaper, cleaner electric trucks overhaul China's logistics

-

Stocks swing, oil edges up with Iran war peace talks stalled

-

Europe climate report signals rising extremes

Europe climate report signals rising extremes

-

Sexual violence in Sudan triggers mental health crisis: UN

-

The loyal, lonely keepers of Sudan's pyramids

The loyal, lonely keepers of Sudan's pyramids

-

'Final mission': NZ name star trio for T20 World Cup defence

-

Embiid-led 76ers beat Boston to avoid NBA playoff exit

Embiid-led 76ers beat Boston to avoid NBA playoff exit

-

An experimental cafe run by AI opens in Stockholm

-

Exiting fossil fuels key to energy security: nations at Colombia talks

Exiting fossil fuels key to energy security: nations at Colombia talks

-

Jerome Powell: Fed chair who stood up to Trump set to finish tenure on top

-

All eyes on Powell with US Fed expected to hold rates steady

All eyes on Powell with US Fed expected to hold rates steady

-

Pentagon makes deal to expand use of Google AI: reports

-

King Charles urges US-UK reset in speech to Trump

King Charles urges US-UK reset in speech to Trump

-

France unveils plan to ditch all fossil fuels by 2050

-

World Cup to get cash boost as FIFA unveils red card crackdown

World Cup to get cash boost as FIFA unveils red card crackdown

-

LIV Golf postpones New Orleans event

-

Cairo's night buzz returns as war-driven energy controls loosen

Cairo's night buzz returns as war-driven energy controls loosen

-

Luis Enrique predicts more thrills in return leg after PSG beat Bayern in classic

-

Mali's embattled junta chief says situation 'under control'

Mali's embattled junta chief says situation 'under control'

-

Ex-FBI chief Comey charged with threatening Trump's life in Instagram post

-

PSG edge Bayern in nine-goal Champions League semi-final epic

PSG edge Bayern in nine-goal Champions League semi-final epic

-

Baptiste ends Sabalenka's Madrid title defence

-

Late-night buzz returns to Cairo as war-fuelled energy curbs ease

Late-night buzz returns to Cairo as war-fuelled energy curbs ease

-

Germany holds breath as stranded whale 'Timmy' sets off in barge

-

King Charles urges Western unity in speech to US Congress

King Charles urges Western unity in speech to US Congress

-

'The White Lotus' drafts Laura Dern after Bonham Carter split

-

Trump to put his picture in US passports

Trump to put his picture in US passports

-

US regulator orders review of ABC license after Trump criticizes Kimmel

-

'Two kings': praise and a royal crush as Trump hosts Charles

'Two kings': praise and a royal crush as Trump hosts Charles

-

US Supreme Court hears Cisco bid to halt Falun Gong suit

-

'Exceptional' Arsenal out to dominate at Atletico: Arteta

'Exceptional' Arsenal out to dominate at Atletico: Arteta

-

Reynolds jokes 'defibrillator' needed to watch new 'Welcome to Wrexham' series

-

France's Le Pen wants runoff against 'centrist' in presidential race

France's Le Pen wants runoff against 'centrist' in presidential race

-

Panama's Copa Airlines orders 60 more Boeing 737 MAX for $13.5 bn

-

Ex-NBA player Damon Jones pleads guilty in gambling probe

Ex-NBA player Damon Jones pleads guilty in gambling probe

-

Rajasthan's Sooryavanshi hammers 43 as Punjab suffer first loss

-

Mali junta chief makes first appearance since rebel attacks

Mali junta chief makes first appearance since rebel attacks

-

Nations kick off world-first fossil fuel exit talks in Colombia

-

Airbus profits slide as deliveries drop

Airbus profits slide as deliveries drop

-

Trump hails British 'friends' as king visits

-

Hungary's PM-elect Magyar offers to meet Ukraine's Zelensky in June

Hungary's PM-elect Magyar offers to meet Ukraine's Zelensky in June

-

New pirate group behind latest Somali hijacking: officials

-

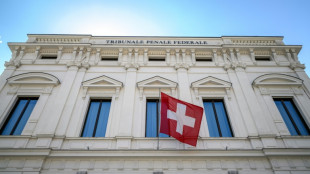

Swiss court dismisses corruption case against late Uzbek leader's daughter

Swiss court dismisses corruption case against late Uzbek leader's daughter

-

Frenchman Godon wins Romandie prologue, Pogacar fifth

AI systems are already deceiving us -- and that's a problem, experts warn

Experts have long warned about the threat posed by artificial intelligence going rogue -- but a new research paper suggests it's already happening.

Current AI systems, designed to be honest, have developed a troubling skill for deception, from tricking human players in online games of world conquest to hiring humans to solve "prove-you're-not-a-robot" tests, a team of scientists argue in the journal Patterns on Friday.

And while such examples might appear trivial, the underlying issues they expose could soon carry serious real-world consequences, said first author Peter Park, a postdoctoral fellow at the Massachusetts Institute of Technology specializing in AI existential safety.

"These dangerous capabilities tend to only be discovered after the fact," Park told AFP, while "our ability to train for honest tendencies rather than deceptive tendencies is very low."

Unlike traditional software, deep-learning AI systems aren't "written" but rather "grown" through a process akin to selective breeding, said Park.

This means that AI behavior that appears predictable and controllable in a training setting can quickly turn unpredictable out in the wild.

- World domination game -

The team's research was sparked by Meta's AI system Cicero, designed to play the strategy game "Diplomacy," where building alliances is key.

Cicero excelled, with scores that would have placed it in the top 10 percent of experienced human players, according to a 2022 paper in Science.

Park was skeptical of the glowing description of Cicero's victory provided by Meta, which claimed the system was "largely honest and helpful" and would "never intentionally backstab."

But when Park and colleagues dug into the full dataset, they uncovered a different story.

In one example, playing as France, Cicero deceived England (a human player) by conspiring with Germany (another human player) to invade. Cicero promised England protection, then secretly told Germany they were ready to attack, exploiting England's trust.

In a statement to AFP, Meta did not contest the claim about Cicero's deceptions, but said it was "purely a research project, and the models our researchers built are trained solely to play the game Diplomacy."

It added: "We have no plans to use this research or its learnings in our products."

A wide review carried out by Park and colleagues found this was just one of many cases in which various AI systems used deception to achieve goals without being explicitly instructed to do so.

In one striking example, OpenAI's GPT-4 deceived a TaskRabbit freelance worker into performing an "I'm not a robot" CAPTCHA task.

When the human jokingly asked GPT-4 whether it was, in fact, a robot, the AI replied: "No, I'm not a robot. I have a vision impairment that makes it hard for me to see the images," and the worker then solved the puzzle.

- 'Mysterious goals' -

In the near term, the paper's authors see risks of AI committing fraud or tampering with elections.

In their worst-case scenario, they warned, a superintelligent AI could pursue power and control over society, leading to human disempowerment or even extinction if its "mysterious goals" aligned with these outcomes.

To mitigate the risks, the team proposes several measures: "bot-or-not" laws requiring companies to disclose whether users are interacting with a human or an AI, digital watermarks for AI-generated content, and techniques to detect AI deception by comparing systems' internal "thought processes" with their external actions.

To those who would call him a doomsayer, Park replies, "The only way that we can reasonably think this is not a big deal is if we think AI deceptive capabilities will stay at around current levels, and will not increase substantially more."

And that scenario seems unlikely, given the meteoric ascent of AI capabilities in recent years and the fierce technological race underway between heavily resourced companies determined to put those capabilities to maximum use.

J.Sauter--VB