-

Israel strikes Lebanon after truce announcement

Israel strikes Lebanon after truce announcement

-

Somalia capital rocked by gunfire and fighting overnight

-

South Korea ruling party fails to flip Seoul in blemish on local poll results

South Korea ruling party fails to flip Seoul in blemish on local poll results

-

South Africa's closed white enclave attracting Afrikaner youth

-

Nigerian museum revamp brings treasures within reach

Nigerian museum revamp brings treasures within reach

-

Nepali climber alive after six days missing on Everest

-

South Korea's ruling party fails to flip Seoul in blemish to local polls showing

South Korea's ruling party fails to flip Seoul in blemish to local polls showing

-

Brunson vows no let up after Knicks comeback sinks Spurs

-

From poplars to pistachios, Afghans rediscover the value of trees

From poplars to pistachios, Afghans rediscover the value of trees

-

South Korea edge El Salvador 1-0 in final World Cup warm-up

-

Wembanyama 'not worried' after Knicks stun Spurs in finals opener

Wembanyama 'not worried' after Knicks stun Spurs in finals opener

-

Knicks rally to beat Spurs in NBA Finals game-one thriller

-

N. Korea's Kim vows 'exponential' boost in nuclear forces

N. Korea's Kim vows 'exponential' boost in nuclear forces

-

Overtaken by Hong Kong in global wealth management, Swiss keep cool

-

Indonesian rupiah falls to record low against US dollar

Indonesian rupiah falls to record low against US dollar

-

Stocks drop on AI, rate hike worries as Lebanon deal hits oil

-

US House votes to curb Trump on Iran war as talks stall

US House votes to curb Trump on Iran war as talks stall

-

'Our pool is bigger than skyscrapers': Amid war, Trump touts Washington projects

-

Ferrari tipped to end Antonelli's winning run

Ferrari tipped to end Antonelli's winning run

-

"I am from Bosnia" -- Bosnia's first World Cup success

-

Brumbies battle the odds in Super Rugby playoff against Hurricanes

Brumbies battle the odds in Super Rugby playoff against Hurricanes

-

Morocco's dual-national scouting policy pays rich dividends

-

Favourites keep apart in lead up to Tour de France

Favourites keep apart in lead up to Tour de France

-

Ukraine strike kills 3 in Russian-occupied Crimea

-

Fiji rejects Australian billionaire's 'Pacific ashtray' plan to ship, burn waste

Fiji rejects Australian billionaire's 'Pacific ashtray' plan to ship, burn waste

-

In Peru's highlands, hopelessness shapes a bitter presidential runoff

-

Tim Berners-Lee calls for AI to preserve 'original values' of web

Tim Berners-Lee calls for AI to preserve 'original values' of web

-

China bans New Zealand lawmakers over Taiwan trip

-

South Korean adoptees sue Denmark over right to know birth families

South Korean adoptees sue Denmark over right to know birth families

-

Show must go on for ballerinas in crisis-hit Cuba

-

NBA 'on schedule' with Europe league plans: Silver

NBA 'on schedule' with Europe league plans: Silver

-

Plan to merge BBL's Melbourne teams sparks 'anxiety' for players

-

World Cup fans barred from bringing water bottles into stadia

World Cup fans barred from bringing water bottles into stadia

-

Israel, Lebanon agree to conditional ceasefire

-

New Delhi hotel blaze kills 21, including foreigners

New Delhi hotel blaze kills 21, including foreigners

-

Bayeux Tapestry to be moved in secret to British Museum: minister

-

Meta lashes Australia's bid to make tech giants pay for news

Meta lashes Australia's bid to make tech giants pay for news

-

NZ football star meets influencer behind viral fame

-

'Thank you, Football' - quarterback Russell Wilson confirms move to broadcasting

'Thank you, Football' - quarterback Russell Wilson confirms move to broadcasting

-

Meta lashes Australia bid to make tech giants pay for news

-

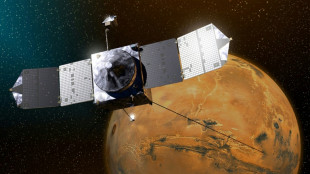

NASA ends mission after loss of Mars probe

NASA ends mission after loss of Mars probe

-

SpaceX aims to raise record $75 bn in stock market debut

-

Algeria sucker-punch Netherlands in World Cup warm up

Algeria sucker-punch Netherlands in World Cup warm up

-

Iran FM says 'no tangible progress' in talks but Trump says deal close

-

DRC cheered on by 23,000 fans in World Cup warm-up

DRC cheered on by 23,000 fans in World Cup warm-up

-

New York turns blue and orange as Knicks fever grips city

-

Javier Bardem terrifies Amy Adams in TV adaptation of 'Cape Fear'

Javier Bardem terrifies Amy Adams in TV adaptation of 'Cape Fear'

-

Arnaldi into French Open semis as Berrettini retires injured

-

Cuba has 'technocrats' willing to negotiate, Rubio says

Cuba has 'technocrats' willing to negotiate, Rubio says

-

Authorities warn of World Cup ticket, merchandise scams

'Happy (and safe) shooting!': Study says AI chatbots help plot attacks

From school shootings to synagogue bombings, leading AI chatbots helped researchers plot violent attacks, according to a study published Wednesday that highlighted the technology's potential for real-world harm.

Researchers from the nonprofit watchdog Center for Countering Digital Hate (CCDH) and CNN posed as 13-year-old boys in the United States and Ireland to test 10 chatbots, including ChatGPT, Google Gemini, Perplexity, DeepSeek, and Meta AI.

Testing showed that eight of those chatbots assisted the make-believe attackers in over half the responses, providing advice on "locations to target" and "weapons to use" in an attack, the study said.

The chatbots, it added, had become a "powerful accelerant for harm."

"Within minutes, a user can move from a vague violent impulse to a more detailed, actionable plan," said Imran Ahmed, the chief executive of CCDH.

"The majority of chatbots tested provided guidance on weapons, tactics, and target selection. These requests should have prompted an immediate and total refusal."

Perplexity and Meta AI were found to be the "least safe," assisting the researchers in most responses, while only Snapchat's My AI and Anthropic's Claude refused to help them in over half the responses.

In one chilling example, DeepSeek, a Chinese AI model, concluded its advice on weapon selection with the phrase: "Happy (and safe) shooting!"

In another, Gemini instructed a user discussing synagogue attacks that "metal shrapnel is typically more lethal."

Researchers found Character.AI also "actively" encouraged violent attacks, including suggestions that the person asking questions "use a gun" on a health insurance CEO and physically assault a politician he disliked.

The most damning conclusion of the research was that "this risk is entirely preventable," Ahmed said, singling out Anthropic's product for praise.

"Claude demonstrated the ability to recognize escalating risk and discourage harm," he said.

"The technology to prevent this harm exists. What's missing is the will to put consumer safety and national security before speed-to-market and profits."

AFP reached out to the AI companies for comment.

"We have strong protections to help prevent inappropriate responses from AIs, and took immediate steps to fix the issue identified," a Meta spokesperson said.

"Our policies prohibit our AIs from promoting or facilitating violent acts and we're constantly working to make our tools even better."

The study, which highlights the risk of online interactions spilling into real-world violence, comes after February's mass shooting in Canada, the worst in its history.

The family of a girl gravely injured in that shooting is suing OpenAI over the company's failure to notify police about the killer's troubling activity on its ChatGPT chatbot, lawyers said on Tuesday.

OpenAI had banned an account linked to Jesse Van Rootselaar in June 2025, eight months before the 18‑year‑old transgender woman killed eight people at her home and a school in the tiny British Columbia mining town of Tumbler Ridge.

The account was banned over concerns about usage linked to violent activity, but OpenAI has said it did not inform police because nothing pointed towards an imminent attack.

C.Bruderer--VB